How to lose a cluster in 28 volumes

Synopsis

An EKS cluster promises to be transparent about how many IPs it can provide. Its team understands its limitations and proceeds to use it in production. Enter EC2 volumes, confident in their role of making life better for everybody…

…until it backfires.

The Goosechase

It was just another day deploying new pods in EKS. We found that some pods were failing to schedule for the following reason:

Odd. We barely have workloads running in the cluster. There should be ample available IP addresses.

Some Context

Our EKS cluster uses the default AWS VPC CNI.

It provides native integration with AWS VPC where pods and hosts are located at the same network layer and share the network namespace. The IP address of the pod is consistent from the cluster and VPC perspective.

In other words, each node and pod consumes an IP from our VPC subnets.

On top of this, our nodes are m6i.4xlarge in size, which should provide us with a substantial pool of 234 IP addresses to allocate to our pods. This is calculated by:

max number of network interfaces * number of IPs per interface

According to the AWS Elastic Network Interfaces limits, each of our instances should give us:

8 * 30 = 240 (reduced to 234 for pods, because each interface's primary IP is not assigned to a pod; the EKS max-pods formula is interfaces * (IPs per interface - 1) + 2)
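The arithmetic can be sketched in a few lines of Python. This is only an illustration, assuming the formula AWS uses for its recommended max-pods values with the VPC CNI in its default secondary-IP mode; the function name `max_pods` is made up for this example.

```python
def max_pods(enis: int, ips_per_eni: int) -> int:
    """Recommended max pods for a node, given its ENI and per-ENI IP limits."""
    # Each ENI's primary IP is not handed out to pods. The +2 accounts for
    # host-network pods (such as aws-node and kube-proxy) that need no pod IP.
    return enis * (ips_per_eni - 1) + 2


# m6i.4xlarge: 8 network interfaces, 30 IPv4 addresses per interface.
print(max_pods(8, 30))  # 234
```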

Did AWS just dupe me?

Troubleshooting

We took a closer look at one node having this issue. It contained approximately 50 pods, which is significantly below the maximum pod limit of 234. This was rather perplexing.

We verified that our cluster’s VPC had an abundance of available IP addresses:

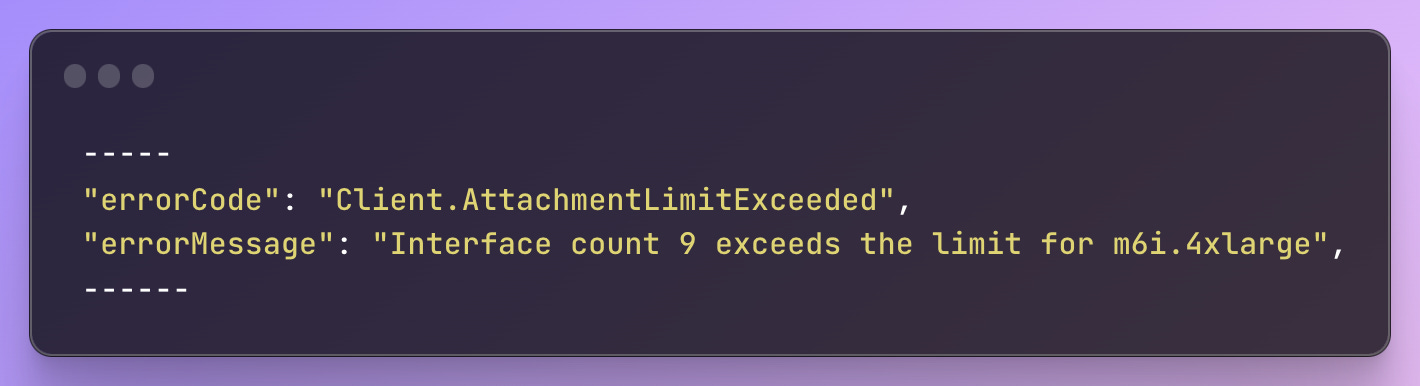

Eventually, we turned to AWS CloudTrail to investigate any AttachNetworkInterface API calls. We noticed the following error:

Strange. How could we have reached the maximum limit for interfaces while simultaneously exhausting available IP addresses with only around 50 pods? That’s when we discovered this on the Node’s network tab:

Aha! Let’s do some simple ENI calculations again:

2 * 30 = 60 (reduced to ~50 because AWS uses some IPs to run their services)

The puzzle started to come together: the node only had 2 network interfaces attached. Other nodes were in similar states, albeit with different ratios of interfaces in the attached and attaching states.

So what gives? Are AWS network attachments highly unreliable?

The Surprise Factor

Every guide we found in the wild focused on troubleshooting this purely from a networking perspective. They emphasized exploring aspects such as adjusting max-pods arguments, tinkering with ipamd configurations, and other network-related considerations.

Yet, we discovered that the problem extended beyond the realm of networking: the Nitro System volume limits.

Most of these instances support a maximum of 28 attachments. For example, if you have no additional network interface attachments on an EBS-only instance, you can attach up to 27 EBS volumes to it. If you have one additional network interface on an instance with 2 NVMe instance store volumes, you can attach 24 EBS volumes to it.

The majority of the latest EC2 instances, including our m6i.4xlarge, are built on the Nitro System. And yes, our workloads use EBS volumes, which were consuming the attachment slots available on each node.

Due to the absence of available attachment slots, network interfaces were unable to attach, costing us approximately 30 IP addresses for each interface that failed to attach.
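To make the shared budget concrete, here is a rough Python sketch. It assumes the 28-slot limit quoted above and treats every volume and every ENI as one slot; the function names and the volume count of 26 are hypothetical, chosen only so that 2 interfaces remain, as on our node. Real nodes attach volumes and interfaces over time, so this shows only the end state.

```python
NITRO_ATTACHMENT_LIMIT = 28  # shared slots on most Nitro instances, per the quote above


def attachable_enis(volumes: int, max_enis: int) -> int:
    """ENIs that fit in the slots left over after `volumes` volume attachments."""
    free_slots = NITRO_ATTACHMENT_LIMIT - volumes
    return max(0, min(max_enis, free_slots))


def raw_ip_count(enis: int, ips_per_eni: int) -> int:
    """Raw IPs across the attached ENIs, before any are set aside."""
    return enis * ips_per_eni


# m6i.4xlarge: up to 8 ENIs with 30 IPs each.
for volumes in (20, 26):  # 26 is a hypothetical volume count
    enis = attachable_enis(volumes, max_enis=8)
    print(f"{volumes} volumes -> {enis} ENIs -> {raw_ip_count(enis, 30)} raw IPs")
# 20 volumes -> 8 ENIs -> 240 raw IPs
# 26 volumes -> 2 ENIs -> 60 raw IPs
```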

I’m curious as to why AWS set up mixed limits on EC2 instances.

The Workaround

To address our specific use case, we set the --volume-attach-limit argument to 20 in our aws-ebs-csi-driver configuration. This leaves 8 of the 28 attachment slots free for ENIs, which is the maximum number of interfaces an m6i.4xlarge can use.

It's important to note that this configuration applies cluster-wide and may not be suitable for clusters with mixed instance types that have varying ENI limits. Fortunately, our current circumstances allow this because our instance types are homogeneous.
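For a mixed cluster, the same reasoning could be applied per instance type. The Python sketch below is a hypothetical helper, not part of the driver: it assumes the 28-slot Nitro limit, and the ENI counts for the smaller instance types are illustrative values that should be checked against AWS's ENI limits table. The `other_volumes` parameter is an assumed knob for slots taken by volumes the CSI driver does not manage (a root volume, for instance); it defaults to 0 to reproduce the value of 20 above.

```python
NITRO_ATTACHMENT_LIMIT = 28  # shared attachment slots on most Nitro instances

# Maximum ENIs per instance type. Only m6i.4xlarge comes from this article;
# verify the other entries against AWS's ENI limits table before relying on them.
MAX_ENIS = {"m6i.large": 3, "m6i.xlarge": 4, "m6i.4xlarge": 8}


def volume_attach_limit(instance_type: str, other_volumes: int = 0) -> int:
    """Volume slots left after reserving one slot per ENI the type can use."""
    return NITRO_ATTACHMENT_LIMIT - MAX_ENIS[instance_type] - other_volumes


def cluster_wide_limit(instance_types: list[str], other_volumes: int = 0) -> int:
    """A single value that is safe for every listed type: the smallest limit."""
    return min(volume_attach_limit(t, other_volumes) for t in instance_types)


print(volume_attach_limit("m6i.4xlarge"))                # 20
print(cluster_wide_limit(["m6i.large", "m6i.4xlarge"]))  # 20
```

Taking the minimum keeps ENI slots free on the largest nodes at the cost of leaving volume slots unused on smaller ones, which is the trade-off a single cluster-wide setting forces.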

We still have to look into long-term options for fixing this. One approach is to reduce our reliance on applications that depend on EBS volumes. Another worth considering is using fewer, larger volumes to reduce the number of attachments required.